My Big Bullshitty Baby

AKA THE SCIENCE AND CRITICAL THINKING MODULE, part 1

Episode 2: Don’t Send a Magician to Do a Scientist’s Job

Welcome back to Don’t Send a Magician to do a Scientist’s Job, the (currently, as of these words, 1.02 episode long) blog series about our adventures in revamping our stats/methods/skills curriculum. First post here if you wanna get caught up: BOOP.

Here in Episode 2, I wanna tell you about the super cool Science and Critical Thinking module I got to double teach this year. It was amazing fun!

We had:

A replication of a ~1800 cite Psych Science paper as an assessment – data collected on Prolific, launched in class

Guest experts opining on 4 questions about critical thinking, science, AI and developing tech, and their own work. This was amazing.

Weird and confusing memes instead of bullet points on slides.

Some activities that worked! Others that were entertaining in failure!

etc

In this post, I’ll lay out the overall goals of the module, a bit of my scattered thinking going in, and then show the overall layout of things.

Then, I’ll close with some detail on the first couple of weeks of the module…and A CLIFFHANGER [dramatic music and jarring close up zoom]

Welcome aboard!

The Goal

We wanted the students’ first semester on campus to be amazing – fun, inspiring, supportive, challenging. These first modules set the tone for an undergraduate’s entire education here. The first modules I took on various topics as an undergraduate shaped my preferences moving forward; I picked psychology as a major largely because I liked the people teaching it, and was enjoying their first-peek introductory modules.

Having taught methods and stats for a damn long time, I also know that these are modules that many students dread. They’re all either boring details and memorization (methods) or worse yet, math (stats). The flipside of that: I’ve learned that this makes these modules ones where students really really appreciate when instructors make it engaging and approachable.

But good vibes doth not a curriculum make. We also wanted these modules to be practical, to show the students how they can use what we’re teaching to be better students. If you can get students scientifically and critically engaged from the outset, that flows outward to the rest of their learning: Learning how science works makes it easier to read scientific papers which makes it easier to do all the assignments that make you do that, and so forth.

We wanted modules that would positively welcome our students to Brunel Psychology, while also setting them up to succeed, to press on with their studies with a critical and intellectually humble approach to the science they’d be learning.

No biggie, right?

Caro was taking our Academic Skills module. Mícheál and I were splitting the stats module (which we were teaching as a conceptual stats module, minimal maths…go read the first post). That left me with the methodology component, which we’d designated the Science and Critical Thinking module.

Or, as it’s called in the title of this post:

My Beautiful Bullshitty Baby

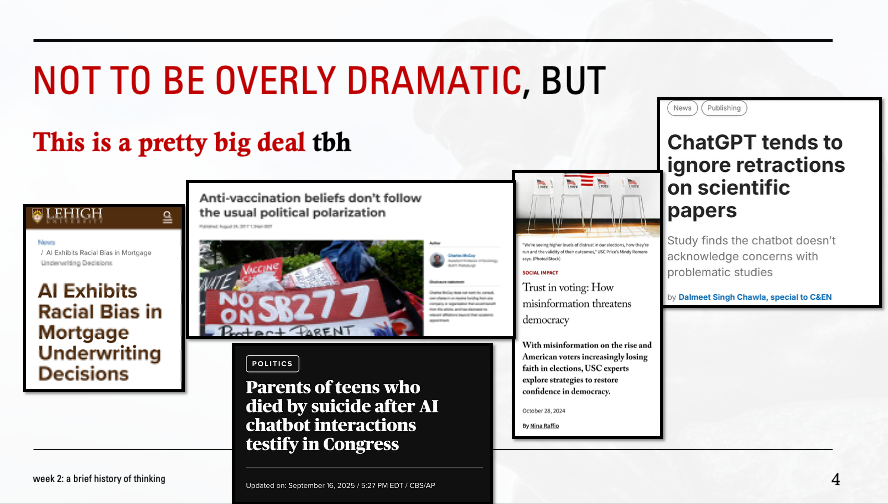

For some years now, I’ve wanted to do a hybrid cognitive science and methodology module that simultaneously teaches students a bit about how humans think (for better or worse), and how science and critical thinking are mental prostheses that help us think better. Sort of a Calling Bullshit setup, but with a more overtly psychological and cognitive science bent.

A psychology/methodology hybrid that’s my effort to teach some epistemic self-defense in an increasingly bullshitty world.

Basically, I wanted to A) show students how we can all think poorly, B) give them some tools to think better, and C) convince them to adopt a mindset where they’re applying these skills. C, naturally, is the most important – there’s a persuasive aspect to teaching critical thinking, as everyone already has skills here that they just need to kick into gear.

Beyond the science and critical thinking angle, this was also at core a methods module in a psychology course. Whenever I teach methods or stats, I try to be mindful of the fact that most of my students will not be going into careers where they are likely to be doing research. But absolutely all of them will have to think about scientific research in their lives. This shit affects them, even if they think science isn’t for them. So I always approach methodology teaching first and foremost from the perspective of a science consumer. Teaching how science is done (again, for better or worse) is important, even for students who are never planning on doing research. So I wanted to illustrate how we think while also illustrating the tools we scientists use to learn about how we think – and also to be up front with the students that science is human, and imperfect, and kinda messy. Something they probably should learn how to, ya know, think critically about.

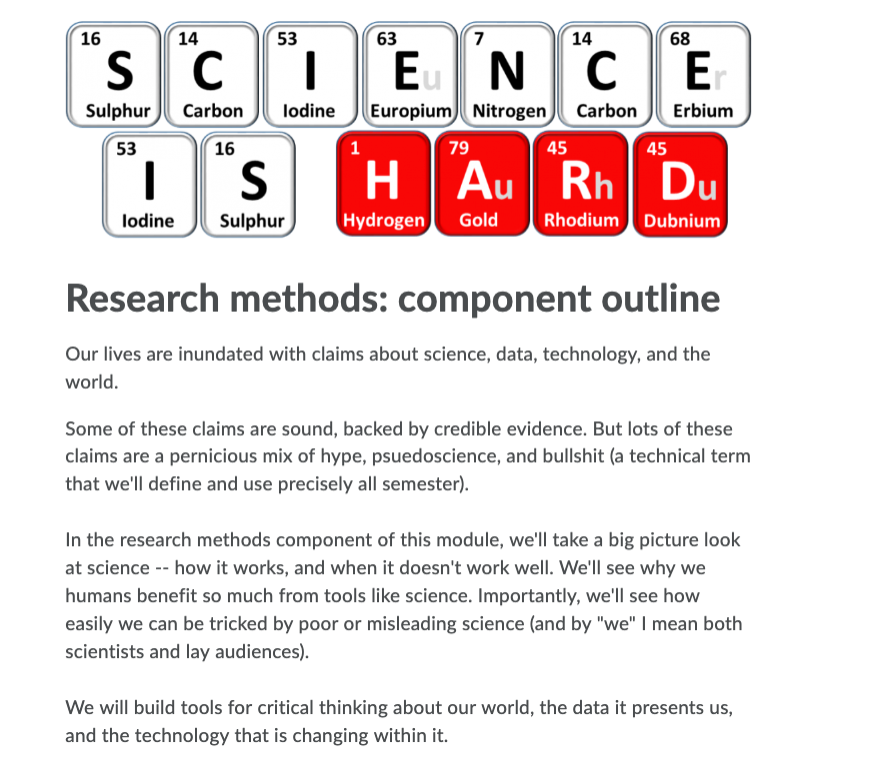

Here’s what that ended up looking like:

The Big Picture Overview

Here’s what students got when they opened Brightspace (I’m assuming with dread) to see what their first semester methods class would be:

And we’re off!

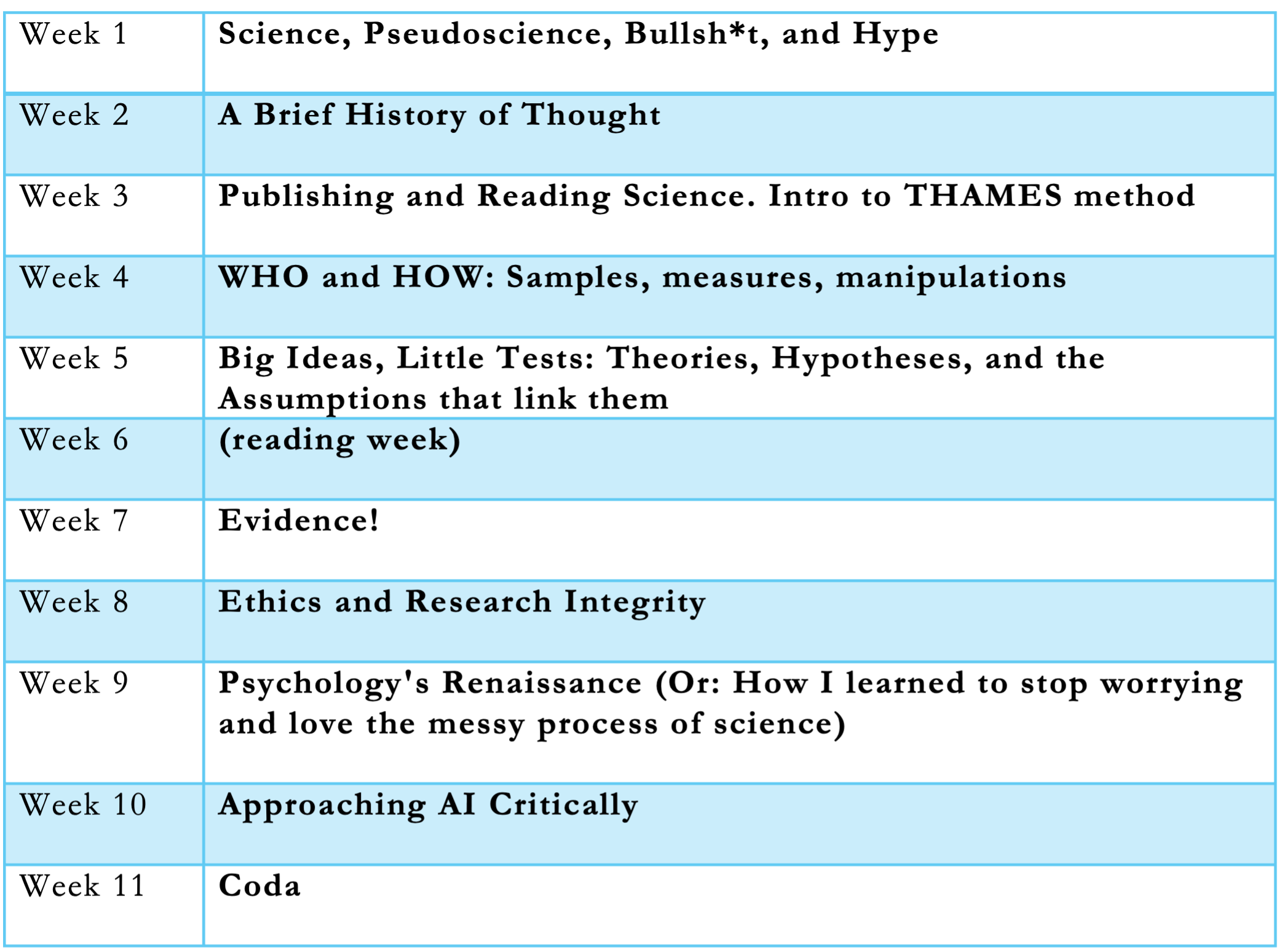

The Layout

A bit of big picture “why we need science” and then wading through the details of what makes science work well (or not). All with an eye to critical thinking about the process and outputs.

In lieu of giving a snapshot of each here, I now want to walk y’all through the first couple weeks of the module. This will set things up nicely for the next (and perhaps most important) blog post, about the THAMES thing we developed – it’s super cool, you’ll love it I promise. But it ended up being the backbone of the module, and a lot of the week 4-8ish material won’t make sense until we explain it.

In the next post (spoiler).

In the meantime: First couple weeks ahoy!

Week 1: Science, Pseudoscience, Bullsh*t, and Hype

Welcome to university everyone!

First lecture we did some cool activities to illustrate some things about our thinking, and about science. An absolutely killer confirmation bias example worked great, because it always works great[1]. An attempt at illustrating publication bias with M&M color estimation in sorted samples went pretty well! A couple other examples didn’t work great, and took up too much time. But everyone seemed pretty engaged.

We wrapped up with some definitions.

I did not want my students approaching science from some Popper for Complete Ignoramuses naïve falsificationism philosophy of science-lite perspective. I wanted this module to wallow in science as it’s actually practiced – not as a cookie cutter process or as an infallible authority we ought to defer to, but as a complex set of cognitive tricks and cultural norms around evidence that works amazingly well! (sometimes, maybe even usually, but not always)

Pseudoscience has scientific pretensions and wants naïve scientific epistemic deference, but without playing by the rules that get you there. Nice try.

Then we talked bullshit for a bit.

bullshit as truth-noncaring but confident

With hype of course being the gasoline on a fire, whether that’s a science fire, a pseudoscience fire, or a bullshit fire. Hype just burns, it doesn’t just target claims with certain evidentiary standards.

burn mfer burn

End of week 1!

Right out of the gate, and continually throughout the module, I emphasized that science is inherently social and cultural, inherently human, and thus inherently imperfect. It’s the best tool we’ve got for answering lots of questions! But it’s also inextricably human.

And you’ve met them, right – the humans? Yikes!

Week 2: A Brief History of Thinking

Looks like it’s a Netflix true crime documentary about Auguste Rodin’s dark side. But it’s NOT.

It’s thinking about thinking, to help convince people to think better. Because that’s important.

Week 2. Critical thinking is ultimately about metacognition: are you using your varied mental faculties in the way you want to be using them? Are you satisfied with the reasons behind choices you’re making?

So I wanted to give everyone a whirlwind tour of thought: how we got to be a big smart (but stupid also) lumbering ape with enough cultural intelligence to develop antibiotics, fly to the moon, and ~~destroy earth in service to Mammon~~ build telescopes so fucking big they let us see back in time to the cosmic belch that formed our universe. Which is pretty cool if you think about it.

But how do we do that?

And if we’re so smart, then how come we ain’t?

Started both prep and lecture at the Natural History Museum, with some human evolution. I started working from NHM occasionally when I was writing my book Disbelief, and it seemed a good place to go for inspiration and lecture prep. This building is science embodied, and it’s got a great aura for inspiring teaching prep. I found myself working here a few times throughout the semester, when I hit speed bumps or mid-semester lag/fatigue.

If you want to discuss human thinking, you gotta give a shout to culture, of course. We aren’t just big and smart/stupid, we’re also cultural. We learn from each other – again, for better or worse.

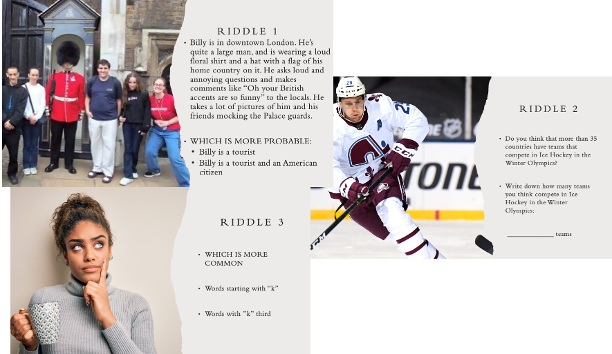

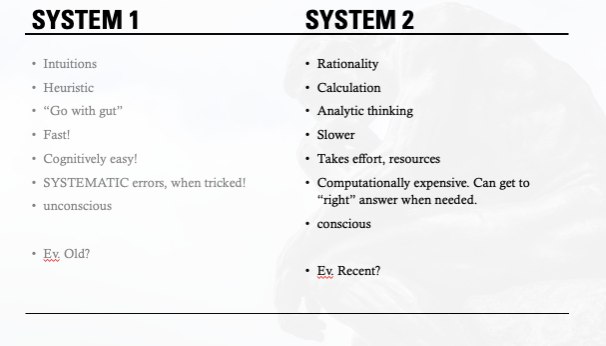

Next up was doing some activities to illustrate how some heuristics and biases work – we did representativeness, anchoring, availability, all the usual suspects. Talked about System 1 and System 2 thinking, motivated cognition.

Closed with some musing and convo on how we might be able to think better. Strong hint here that science helps us systematize our critical thinking – it’s a social institution to routinize and systematize a certain style of thought. And it works amazingly well, when done right!

But…how does it get done?!?

Week 3: Publishing and Reading Science

I wanted to start digging into science from the consumer side first. Let’s say the students are hearing about a new scientific thing…what’s the pathway from the science-doer to them? What processes are in place to ensure that the information they’re hearing is good – at the level of scientific publication, media coverage, etc.?

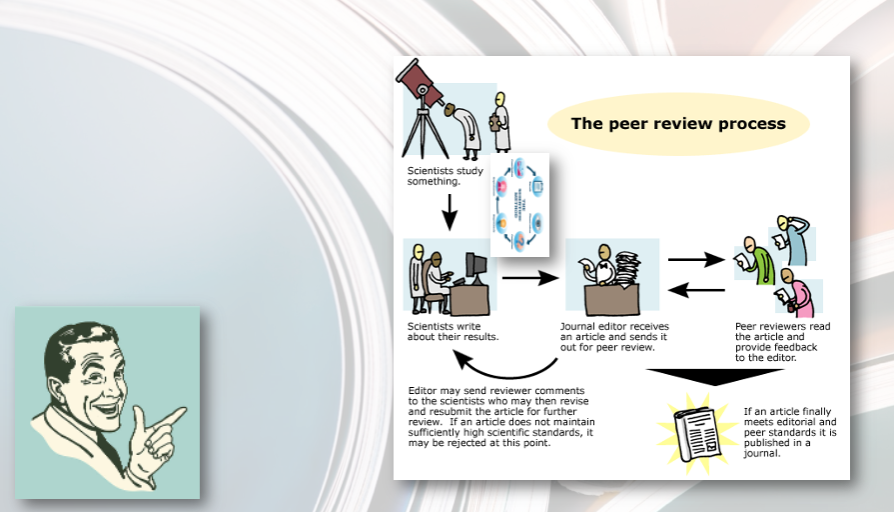

We talked about the nostalgic cookie cutter fiction of a seamless peer review process.

We want it to be a quality control process that filters good info to “good” journals. But imperfections in the publication process, plus bad faith exploiters of it (on the individual or publisher side), can corrupt this process. The real thing gets messy.

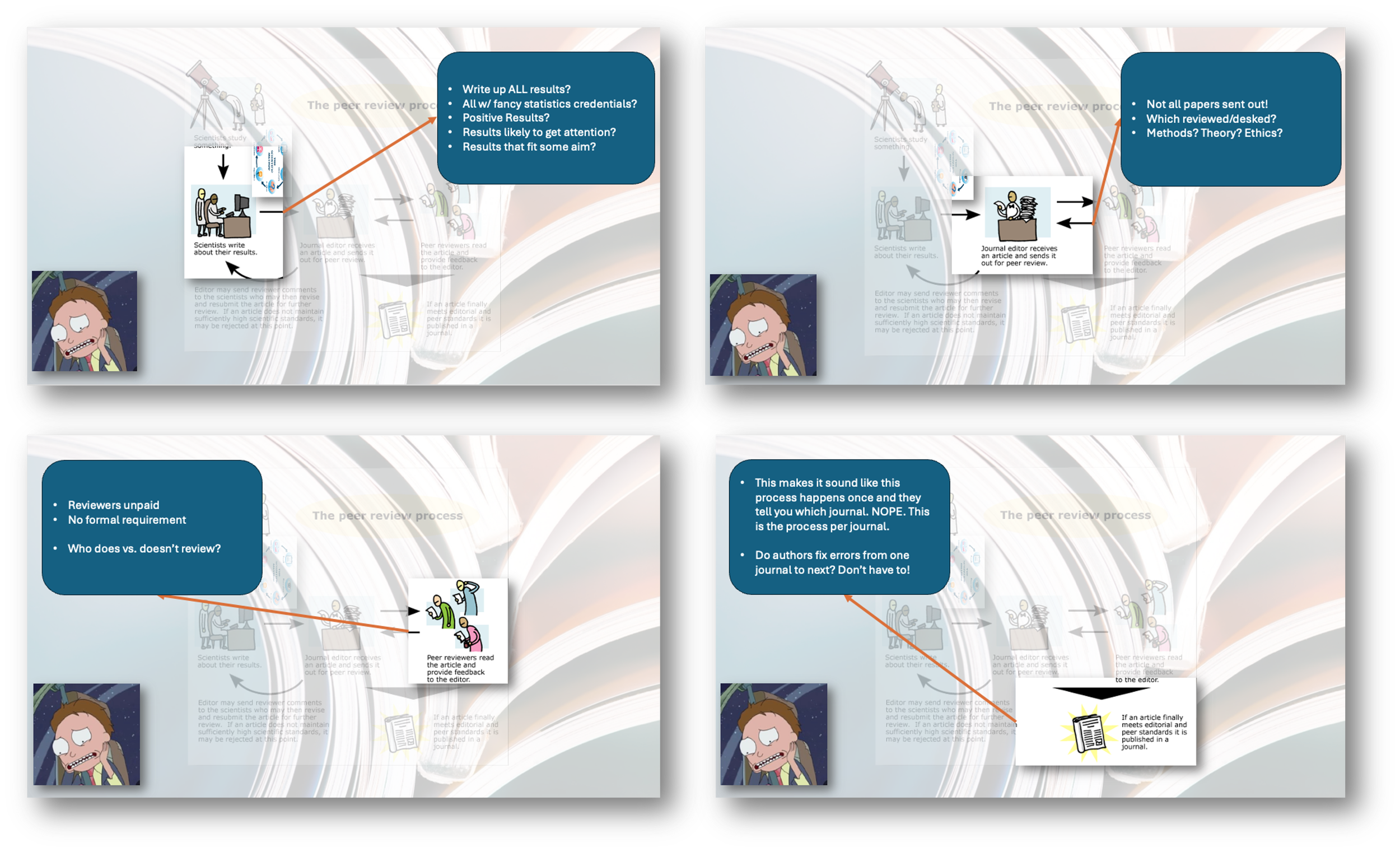

Then I had some fun with image transparency in PowerPoint[2] to do little call-outs on where peer review (actual) falls short of Peer Review™.

the sausage doesn’t look so cute now, does it?

To make the imperfections of science as a process vivid, we then chatted about some prominent recent retractions and losses-of-confidence in splashy work – a couple splashy religion paper retractions, some ego depletion stuff, Gino/Arielygate, and the recent case of a paper marketed (with funding calls) about how cool open science is getting retracted because it turns out the data were actually from a meticulously documented and preregistered study on the paranormal woo of the “decline effect.”

So if you can’t tell if science is sound simply because it’s been peer-reviewed, or appears in a prestigious journal, or if it’s done by bigwigs who say the right things publicly about science and transparency, then how can you tell if science is good? Good news and bad here:

Okay, that sounds hard.

If only someone would develop a handy mnemonic/tool for helping people read and think critically about science. I bet that mnemonic could also help organize the weeks to come. And it’d be even better if it was named in a regionally appropriate manner.

What’s that you say? Exactly such a tool exists, and is rad as hell, and has an unintentionally regional name?

And I’ll tell y’all about THAMES next time…[3]

Not to overhype things, but: it’ll change your life and the lives of all of your descendants for the better, for all eternity.

PS1…. that confirmation bias example:

[1] “I’m thinking of a rule. I am going to say three numbers that illustrate the rule. Then people can try to guess the next number, according to the rule. Guess a number, and I’ll say yes or no.

The first three numbers are: 2…….4…….6…….”

Inevitably, students will guess 8 (Yes!), then 10 (Yes!), then 12 (Yes!). You cut them off around 14 or 16 or 20+ if you’re feeling lucky. Then you ask everyone to write down the rule. Then you discuss the rules. Everyone comes up with some version of “ascending evens” or “skip two.”

Then you drop the bombshell: the rule was “every number is a positive integer.” Every guess fit the rule! But nobody EVER guesses like…. 157, or -6.7, numbers that would actually TEST the rule they had in mind.

Then you’re off to the races, talking about confirmation bias!
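If you want to see why those guesses teach you nothing, here’s a tiny Python sketch. It’s just my illustration, not something we ran in class, and the function names are made up for this post. It assumes the students’ working hypothesis is “positive even numbers” and checks which guesses could actually tell that hypothesis apart from the real rule: a guess is only informative if the two rules would give different answers to it.

```python
def secret_rule(n):
    """The instructor's hidden rule from the example: any positive integer."""
    return isinstance(n, int) and n > 0


def student_hypothesis(n):
    """Assumed stand-in for what students typically have in mind: positive evens."""
    return isinstance(n, int) and n > 0 and n % 2 == 0


def informative_guesses(guesses):
    """Keep only the guesses where the two rules disagree.

    If both rules give the same answer, hearing "yes" or "no" cannot
    separate the student's hypothesis from the real rule.
    """
    return [g for g in guesses if secret_rule(g) != student_hypothesis(g)]


if __name__ == "__main__":
    confirming = [8, 10, 12, 14, 16]   # what students actually guess
    testing = [157, -6.7]              # what they almost never guess

    print("Informative confirming guesses:", informative_guesses(confirming))
    print("Informative testing guesses:", informative_guesses(testing))
```

Every one of the “ascending evens” guesses gets a yes under both rules, so none of them can separate the two. A guess like 157 can, because the hypothesis predicts a no and the instructor says yes. A guess like -6.7 gets a no under both of these particular rules, so it doesn’t separate them, but it would still rule out broader candidates like “any number at all.”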

PS2… image transparency fun

[2] (layer two identical images, make the lower one like 90% transparent. Then crop the upper one to highlight whichever call-out you want, shadow it. Voila!)

PS3…

[3] Mediocre achievement unlocked: blog post with nerdy cliffhanger ending. +4 internets to me. I bet the haters feel pretty silly right about now.