Welcome back to Don’t Send a Magician To Do A Scientist’s Job, the non-critically non-acclaimed blog series about Brunel Psychology’s adventures in teaching methods and stats better.

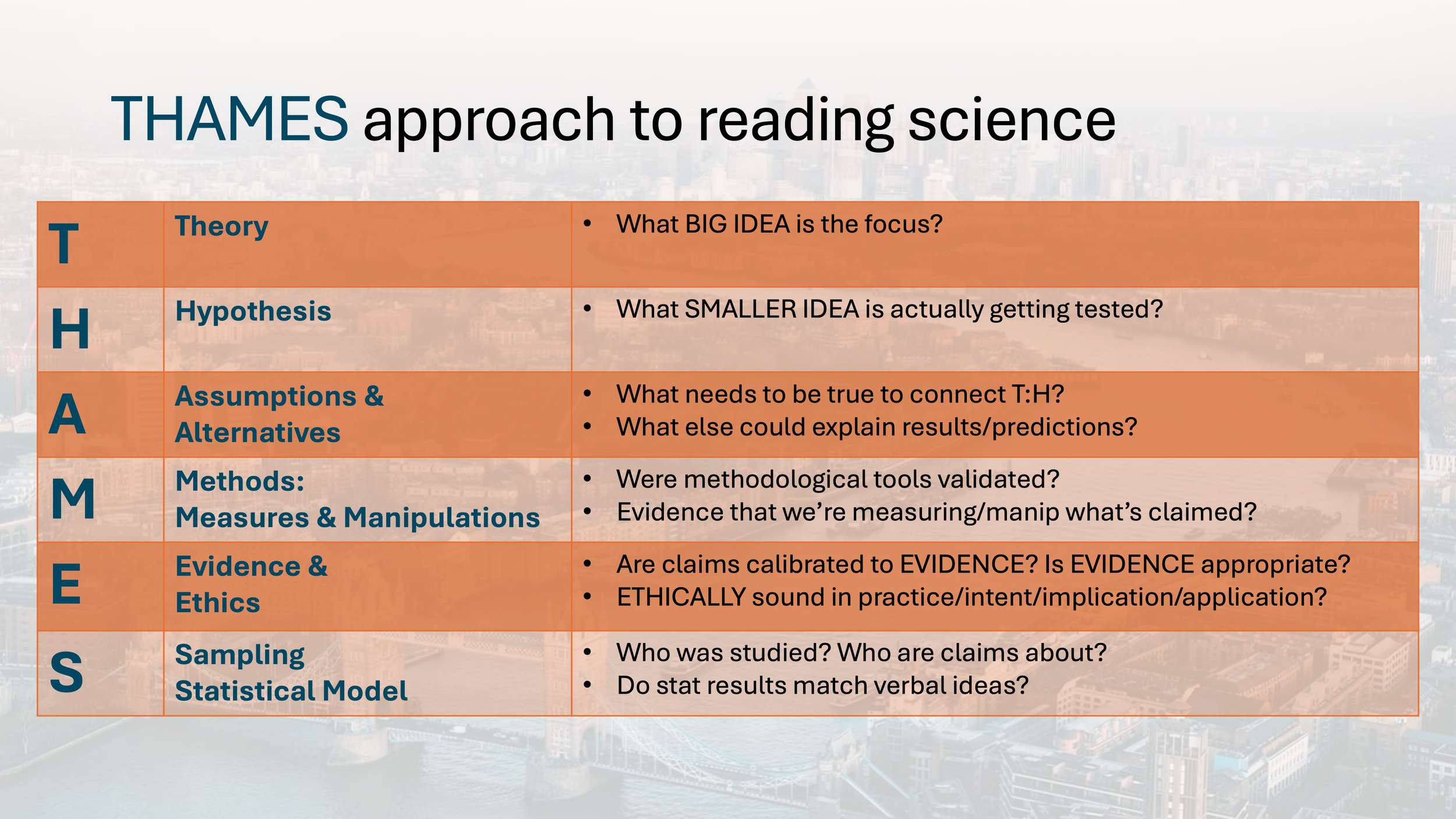

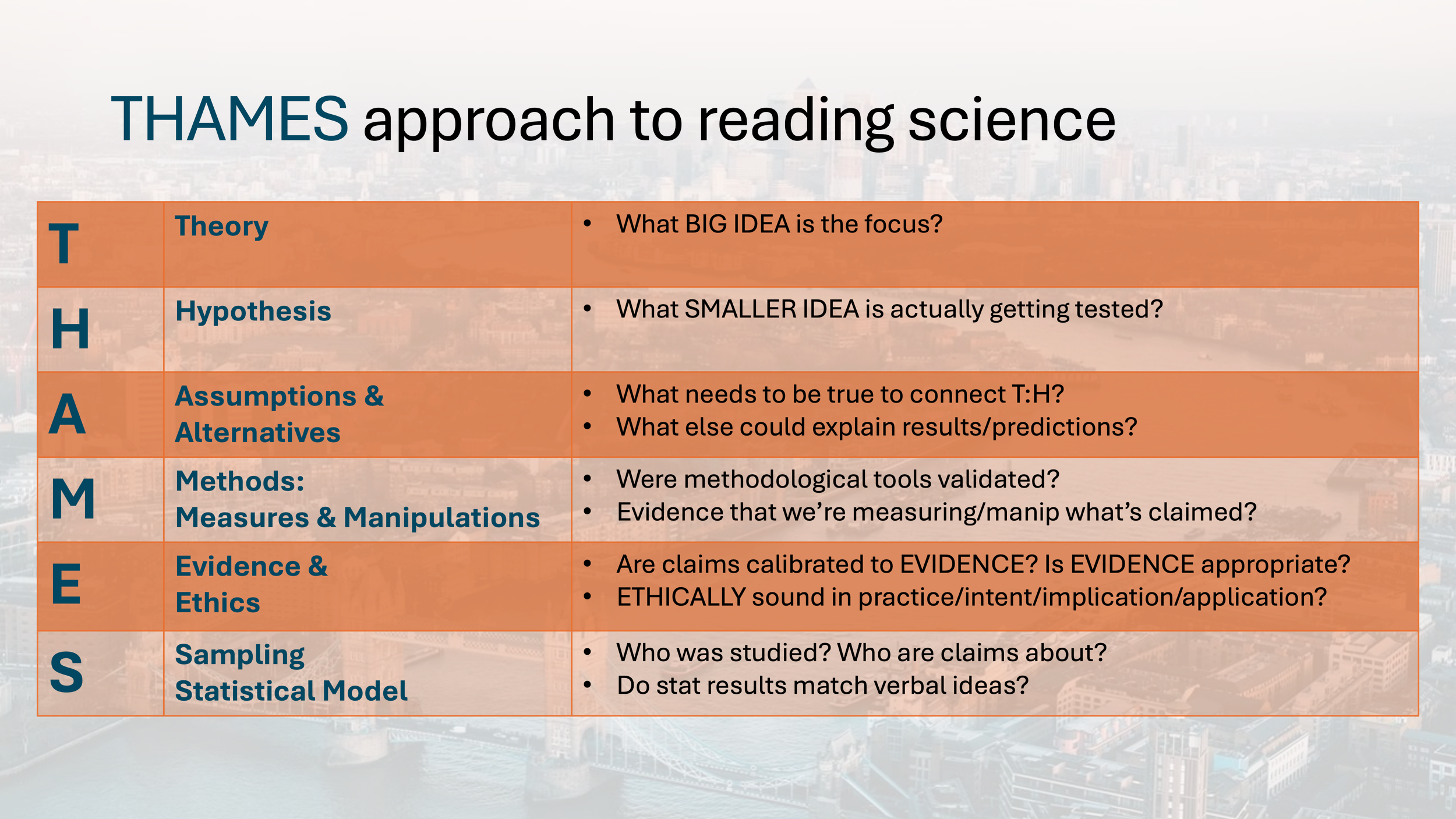

This week, we’ll be asking about how we can tell if a given bit of science is well done, trustworthy science. And we’ll be learning that the answer is THAMES.

See? Like this…

THAMES proved to be an incredibly handy teaching mnemonic and also sort of an orienting compass for the middle chunk of my Science and Critical Thinking module. So what’s THAMES and why do we need it?

Recap

POV: You’re a student in Will’s Science and Critical Thinking module. You thought it was going to be a boring methods module like you hated in A levels. The journey has seemed a little weird so far...

You learned about Science, and about Bullshit.

Then you learned that we humans are pretty smart, but also predictably stupid and prone to making really basic errors in our decision making.

Something like science might act as a mental prosthesis to help us see the world more clearly. But that only works because science is a social institution whereby we all kind of agree to play by certain rules in order to check each other’s biases.

And in principle that works great! But also, in practice it doesn’t work at all like the cookie cutter fiction of the scientific method and peer review process that we tell children about during the years we also teach them about Santa Claus.

In practice, we know that bias creeps in – inevitably, because science is done by humans. Careerist motives can lead people to cut corners or misrepresent their work. On the publisher side, the prestige market of academic publishing is highly exploitable for financial gain by publishers, with unscrupulous authors being only too willing to play along. Heck, we saw that even a team of authors at the forefront of talking about how we should do science better, more honestly, and more transparently aren’t above factually misrepresenting their science to score a glam publication that was laundered immediately into fundraising PR copy, and then publicly misrepresenting the myriad reasons for their paper’s eventual journal-forced retraction.

So if you can’t judge a paper’s scientific quality (or even basic integrity in honest reporting) by: the fact that a paper’s been peer reviewed, or published in a serious sounding journal, or has been published by famous authors, or has been published by authors who claim to value scientific integrity, then are we just stuck?

No, it just means that lazy heuristics are lazy. Science is about thinking hard, and methodically, about problems. So too for judging science – it’s naïve to think that judging science will be more mindless than doing science, just by following some silly heuristic like “big N = good” or “preregistered = good”. Science has never been that simple.

So hard thinking needed. Okay. But thinking hard about a problem is easier if you have a roadmap for what to look for. What are the key components that make up scientific quality? Where should people be looking to see what’s important and good in a scientific paper?

THAMES, that’s where

Scientific papers have their own internal logic. And they’re easy enough to read, IF…

You know how science works, enough to spot good/bad elements

You know which key bits of science are supposed to go where in the paper

You know how to find evidence for various claims made in a paper

You’re adept at telling which parts of the paper go with which sorts of scientific claims

You can put all this together

For most of us, these are skills you hone over years and years of practice.

And I wanted to give my students a crash course in all that, in about 6 weeks. THAMES emerged as a handy mnemonic for showing my students what to look for in papers. Along the way, it also gave me an opportunity to delve more deeply into various topics as we went, to give my students a clearer view of how psychological science works.

THAMES is a mnemonic for my students. It’s also a roadmap for much of my module. And I’m hoping that it’s a tool that helps my students think more clearly about science moving forward.

Enough throat clearing, here’s THAMES

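Letter by letter: Theories, Hypotheses, Assumptions, Measures and Manipulations, Evidence (and Ethics), Sampling (and Statistical models).

Easy enough, eh? If you can remember THAMES, you can figure out what to look for as you’re reading a paper. These might even be useful things to keep in mind when writing a paper.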

Instead of just giving students this tool and saying “there, go find some science and have fun” I ended up spending the following weeks developing each letter in some detail, using them as launchpads for conversations about how science can work (better or worse), depending on design choices made by researchers.

For a while, each week’s lecture would tackle some THAMES letters. Here’s what that looked like:

Big Idea, Little Test: Theories, Hypotheses, and the Assumptions that Link Them

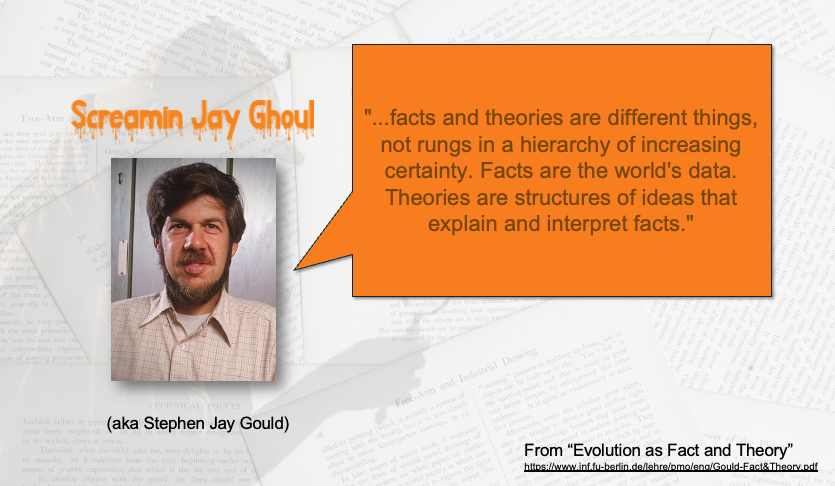

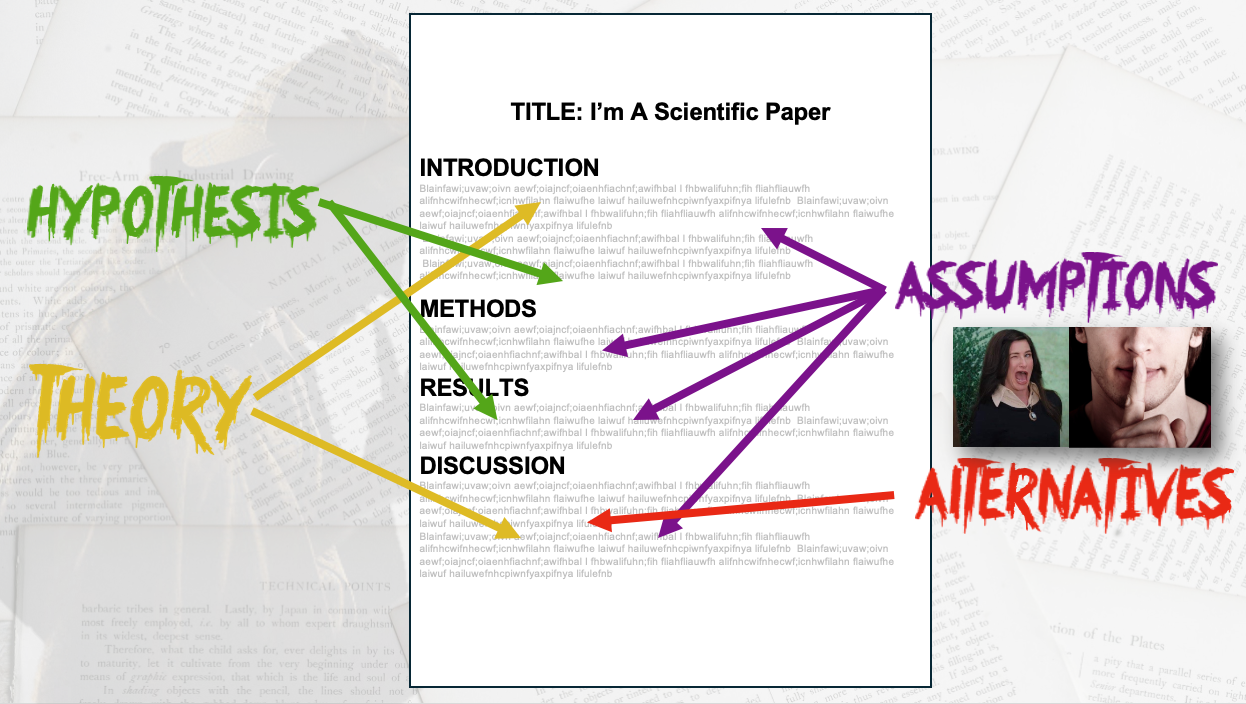

We chatted about how theories are the grand unifying conceptual structures of science, linking together observations and making sense of them. This is different from a hypothesis, which is usually the small idea being tested in a given study. This lecture ended up happening near Halloween time, so everything was a bit….thematic.

We chatted about how we judge theories in part by what they explain relative to what they assume. We like good value theories, that keep things simple (but not too simple!)

Natural selection is Theory GOAT because it explains so damn much while assuming so damn little.

And it’s those pesky ASSUMPTIONS that are often the most important thing. How can you be sure that this study tests the stated hypothesis? That the stated hypothesis has any relevance whatsoever to the stated theory?

The authors will have ASSUMED a lot of things to make their project go – some of those assumptions might be made explicit, others are likely left implicit. Hell, some are probably deliberately hidden by the authors, who really were hoping a reviewer didn’t spot it in peer review!

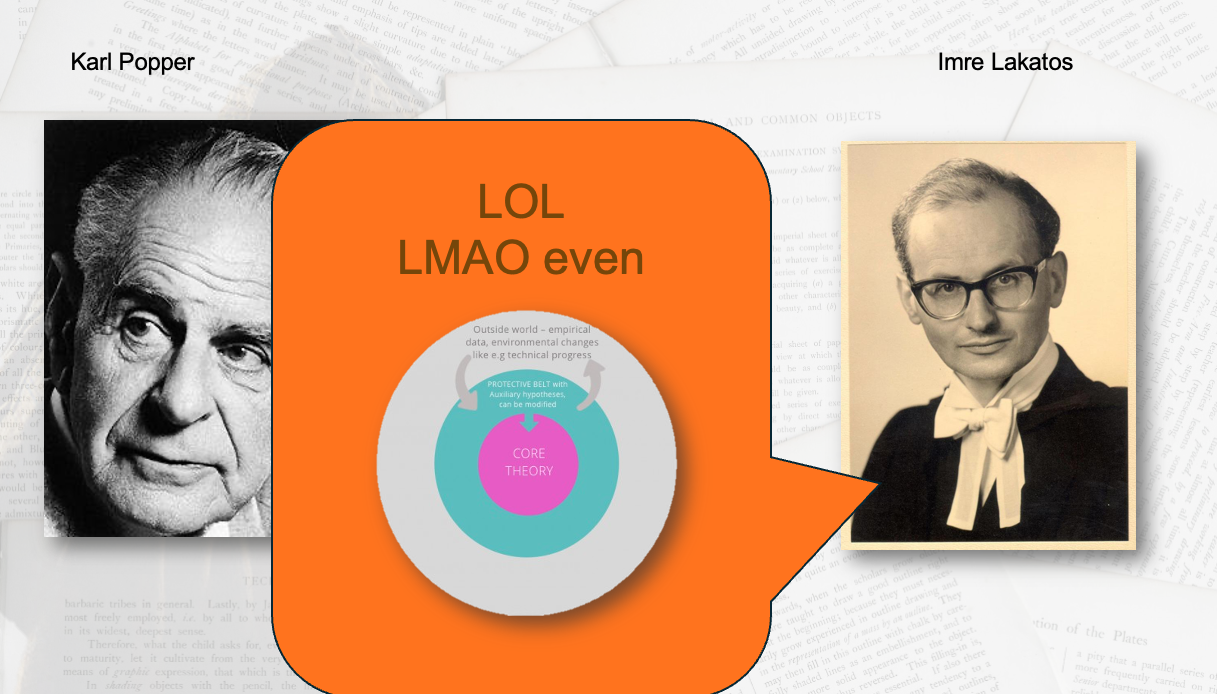

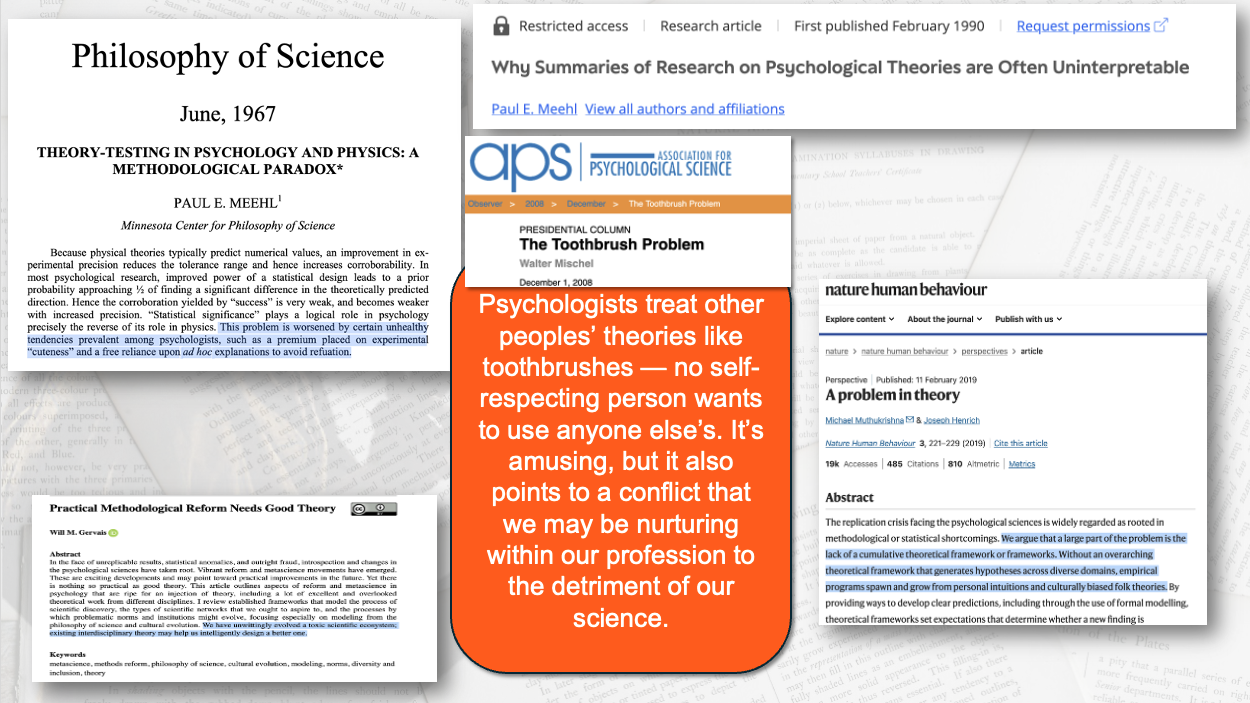

All this talk of theory, hypothesis, and assumption opened the door for a nice li’l conversation on philosophy of science; how the naïve Popperian “science is science cuz falsifiable” fiction isn’t taken all that seriously by modern philosophers of science, but that some/many/most psychs don’t read philosophy of science – some proudly!

This could be why psychology has had such an interesting relationship with the word “theory”

Looping back to the original goal of THAMES – how can students read papers better? – we closed with a nice little map of where to look for T, H, and A…with the cautionary note again that Assumptions are things that the reader will need to fill in – sometimes against the wishes of the author!

Remember: lecture near Halloween. I, unfortunately, do not use horror fonts year round. Yet.

Okay, now that the big ideas of science are sorted, let’s dig into details. What are scientists actually doing?

M Day: Measures and Manipulations

Scientists measure some stuff. Sometimes they manipulate some stuff. This seems important; they spend a lot of time doing this and seem obsessed!

But what makes a given measure or manipulation good? That’s harder.

First off, the usual vocab issues: IV, DV, etc. I tried to make that one vividly clear with this example:

After this, we talked about operationalization in general. Once a scientist settles on the ideas they want to test, the next step is picking ways to do that, for better or worse.

For example, a researcher like me might study something like “people don’t like atheists” in lots of different ways!

lots of different ways to study any given topic!

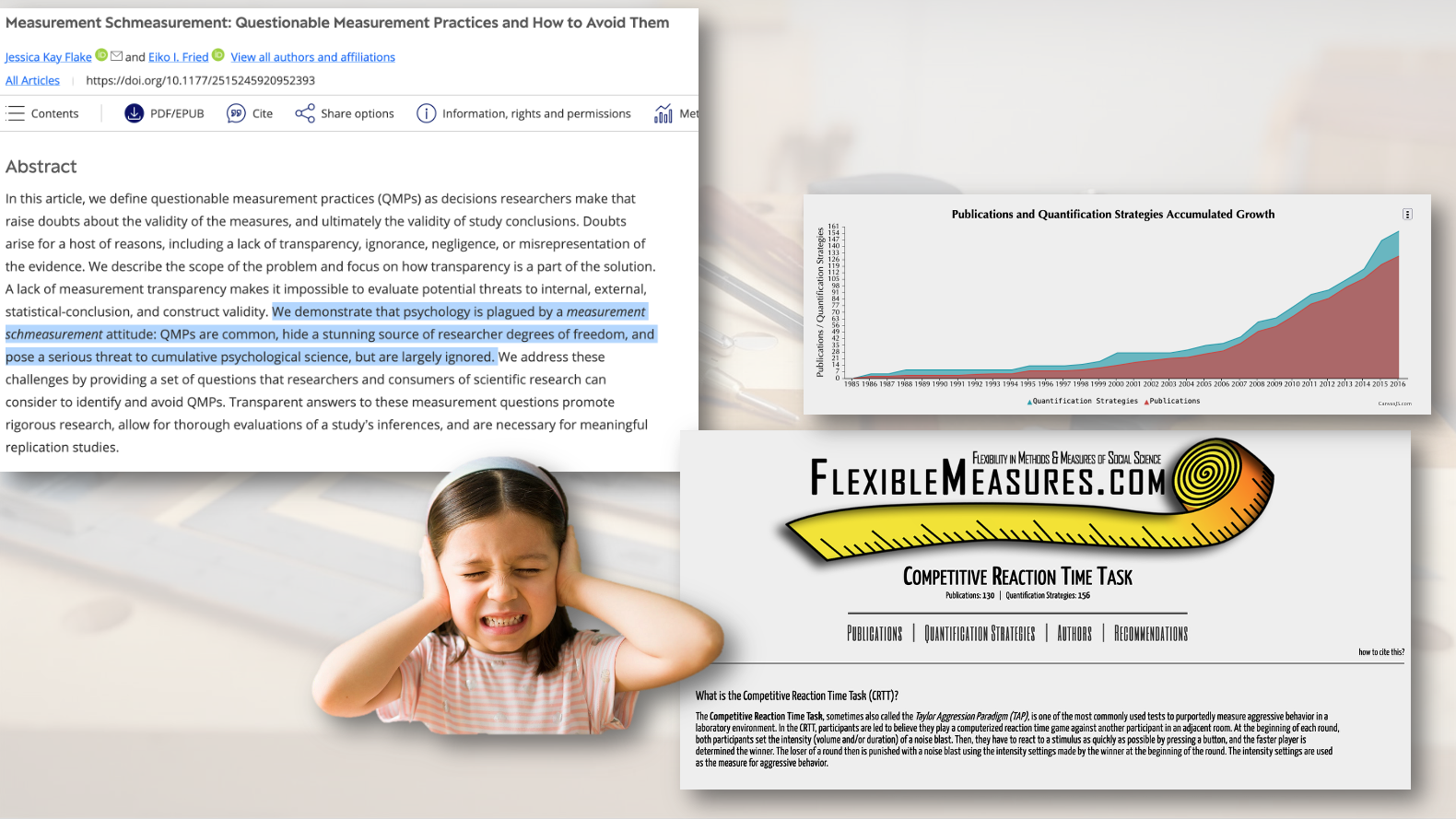

One thing I tried to hammer home is that in psychology, we don’t necessarily have loads of well-understood standardized tasks that have been well-vetted. It’s far messier and more chaotic than that — we’re inventing our tools AS WE’RE TRYING TO THEORY BUILD WITH THEM. Yikes!

On the Measurement side, we’ve got the Measurement Schmeasurement problem, jingle/jangle, and all the rest. We discussed how scientists might devise a dozen different ways to ostensibly measure the same thing. You can see this happening in lots of psychology – how many ways are there to measure aggression or prejudice or replicability? It’s a pink-to-red flag when you see authors devising new ways to measure something, only to abandon that measure for the next paper.

You see the same thing happening on the Manipulation side of things – oftentimes, the reader has no more than the author’s slick smiling assurance that the manipulation worked as advertised. Maybe some crappy ad hoc manipulation check, if you’re lucky.

We chatted about how many papers will provide no more evidence that their measure/manipulation worked than simply citing someone else who has also used these tasks – but that if you go citation hunting you’ll find that the cited paper ALSO DIDN’T VALIDATE SHIT! I reminded the students that in other walks of life “someone did this once” is not taken to be synonymous with “this is good to do.”

And we closed by talking about how it’s the author’s job to convince the reader that their methodology is appropriate….and they should be bringing evidence to that evidence fight, not mere verbal reassurances that these tools are a-okay.

The refrain in this week (and other weeks)?

REMEMBER!!! It is the AUTHOR’S JOB to persuade the READER. You don’t owe the author your credulity. They owe you evidence.

I want my students to open papers and when they get to the methods section ask “is this really measuring what they say? Do these measures/manipulations actually concern the stated hypothesis? What evidence do the authors provide to reassure me?”

S Day: Sampling

Okay, we’re going to break order a bit here and talk Sampling next. I taught this alongside the Measurement/Manipulation stuff because it’s a key part of the WHAT THEY DID part of science.

Who did the authors sample? Who were their conclusions about?

This one ended up being the students’ first WEIRD people lecture of their university careers.

You see, psychologists write Introductions as if they’re revealing generalizable truths about humans everywhere, but their Methods sections usually show that those claims are a massive overreach. Most of our work fundamentally can’t support the big claims made on behalf of convenience samples. Period. And journals and scientists have done little more than cite the WEIRD people paper about it and figure that’s that.

Heck, students heard about some clumsy recent efforts to quantify the acronym WEIRD, which led to embarrassingly underpowered studies of culture, with substantially misinterpreted summaries (here thinking of Many Labs 2, with a design fundamentally unsuited for claims made in its abstract and marketing).

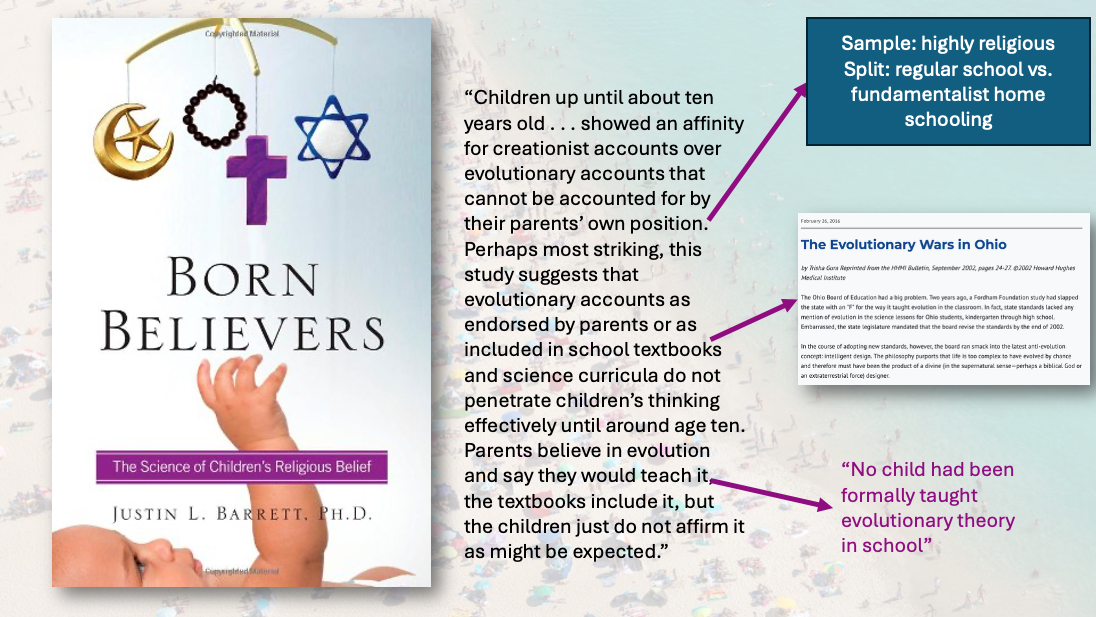

We also saw how sometimes the sampling issues end up generating misleading claims through inappropriate, methodology-illiterate summaries that ignore the actual samples that conclusions are based on. For example, I showed them a trade book by a scientist in which the author profoundly misinterprets a piece of science he chooses to feature, simply by not thinking clearly about the sample in the paper!

not good if the popular summary of a paper is directly contradicted by its sample

EVERY conclusion in a psychology paper describes a participant-by-methodology interaction. The good papers acknowledge this and account for it. The bad papers deliberately ignore this. And then there are plenty of papers that fall in between – simply not giving culture enough thought to appropriately contextualise the findings.

As with Measures/Manipulations, I encouraged my students to think critically here! THEY need to ask whether this sample fits the conclusions, because oftentimes authors won’t.

(Quick aside: I’m highlighting lots of “oops” moments for psychology, problems with how we do things. The idea was to spur critical thinking. Fret not! I also sold them on the amazing things our science is capable of. Guess what? I’m now foreshadowing yet another blog post)

Thinking clearly about measures, manipulations, and samples set up students well to start asking bigger-picture questions about EVIDENCE.

E is for EVIDENCE (and also ethics but we aren’t talking about that today)

We’ll split the ethics conversation into its own blog post (foreshadowing!). And the Statistical Model portion of S is covered in our stats modules. So that means we only have EVIDENCE to go in this post. Huzzah!

This one was a lot of fun! Started out by introducing a couple different ways we can think about EVIDENCE.

First up, evidence can be seen as the set of facts (bound by assumptions) that support a given claim/theory. And as evidence accumulates, this strengthens the theory! A theory that gathers lots of supporting evidence can grow quite strong indeed!

Next, we discussed how sometimes evidence can directly adjudicate between theories. Rarely, evidence will be so clear between alternatives that one is effectively vanquished!

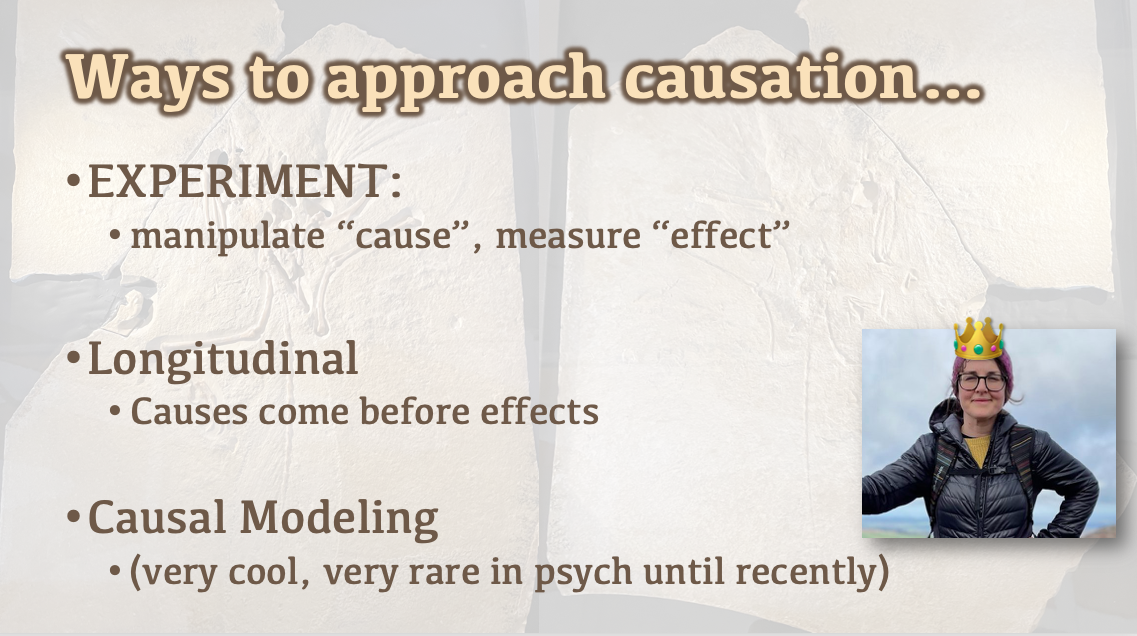

Finally, we talked about CAUSATION: how causal evidence is tricksy evidence.

Given correlational evidence alone, you really can’t tease out causation because lots of alternatives are equally consistent with the evidence you’ve got!
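If you'd like to see that for yourself, here's a minimal simulation sketch. Everything in it is made up for illustration: the variable names, the effect sizes, and the assumption that a single lurking variable Z drives both X and Y. X never touches Y in this toy world, yet the two still correlate. Statistically holding Z constant makes the correlation mostly vanish.

```python
import math
import random


def pearson(a, b):
    """Plain Pearson correlation between two equal-length lists."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def simulate_confound(n=5000, seed=1):
    """Hypothetical world: Z causes both X and Y; X has no effect on Y."""
    rng = random.Random(seed)
    z = [rng.gauss(0, 1) for _ in range(n)]
    x = [zi + rng.gauss(0, 1) for zi in z]  # X = Z + noise
    y = [zi + rng.gauss(0, 1) for zi in z]  # Y = Z + noise (no X anywhere)
    return x, y, z


def partial_corr(x, y, z):
    """Correlation between x and y after statistically holding z constant."""
    r_xy, r_xz, r_yz = pearson(x, y), pearson(x, z), pearson(y, z)
    return (r_xy - r_xz * r_yz) / math.sqrt((1 - r_xz ** 2) * (1 - r_yz ** 2))


x, y, z = simulate_confound()
raw_r = pearson(x, y)
adjusted_r = partial_corr(x, y, z)
print(f"raw r(X, Y)          = {raw_r:.2f}")
print(f"r(X, Y) holding Z    = {adjusted_r:.2f}")
```

With these made-up settings the raw correlation should land somewhere near 0.5 and the adjusted one near zero. The catch, of course, is that in real data you only get to adjust for a Z you thought to measure.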

If you want to make a causal claim, you’ve gotta do one of a few things: run an experiment, think about time, or do some causal modeling (shout out to Queen Abbey, who is teaching some causal modelling, no DAGgity).

Remember folks: Causation causes correlation and correlation correlates with causation.

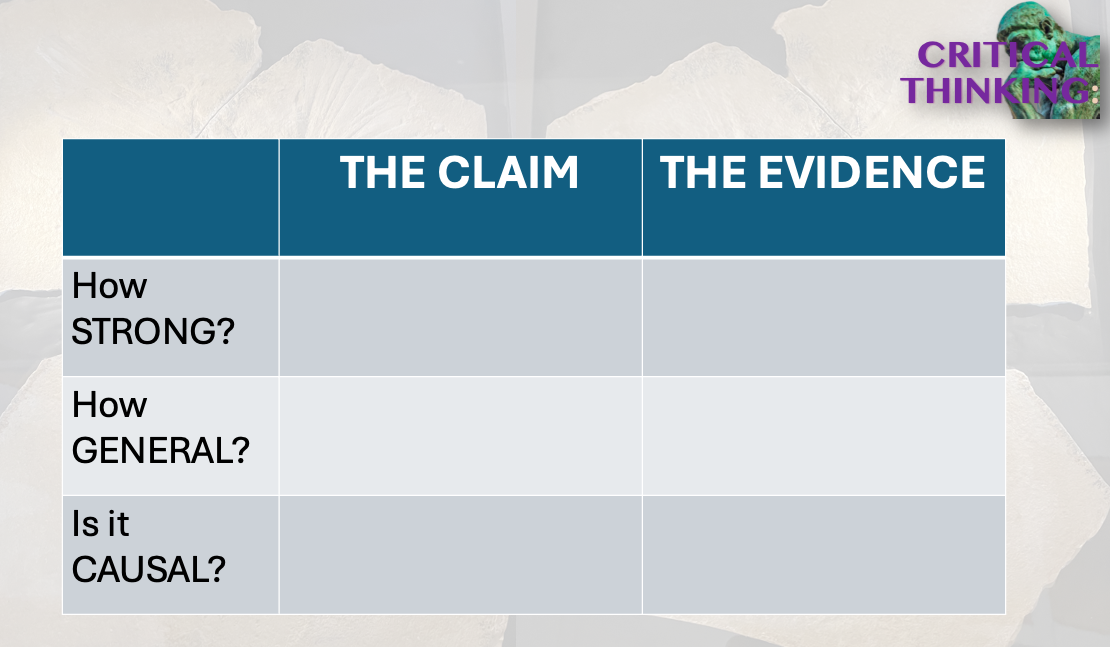

Overall, I tried to get my students thinking about evidence in terms of the claims being made in a paper. Lots of claims might be fine with a small cross-sectional convenience sample; for other claims this would be absurdly inappropriate. EVIDENCE is relative to CLAIM, which means that critical thinking is all about the fit between the two. It’s about calibration.
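One way to get a feel for that calibration is a toy simulation. This is only a sketch under assumptions I've invented for illustration: a true correlation of 0.2, sample sizes of 20 and 500, and 500 imaginary studies at each size. It shows how much the estimate bounces around from study to study, and how often a small study gets the direction of the effect wrong.

```python
import math
import random
import statistics


def pearson(a, b):
    """Plain Pearson correlation between two equal-length lists."""
    n = len(a)
    mean_a, mean_b = sum(a) / n, sum(b) / n
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b))
    var_a = sum((x - mean_a) ** 2 for x in a)
    var_b = sum((y - mean_b) ** 2 for y in b)
    return cov / math.sqrt(var_a * var_b)


def one_study(n, true_r, rng):
    """Simulate one study of n participants and return its observed correlation."""
    x = [rng.gauss(0, 1) for _ in range(n)]
    noise_weight = math.sqrt(1 - true_r ** 2)
    y = [true_r * xi + noise_weight * rng.gauss(0, 1) for xi in x]
    return pearson(x, y)


def run_studies(n, true_r=0.2, studies=500, seed=7):
    """Repeat the same hypothetical study many times with fresh samples."""
    rng = random.Random(seed)
    return [one_study(n, true_r, rng) for _ in range(studies)]


small = run_studies(n=20)
large = run_studies(n=500)

for label, results in (("N = 20", small), ("N = 500", large)):
    spread = statistics.stdev(results)
    wrong_sign = sum(r < 0 for r in results) / len(results)
    print(f"{label}: spread of estimates = {spread:.2f}, "
          f"share with wrong sign = {wrong_sign:.0%}")
```

Same true effect, same design, very different evidence. Whether the small study is "fine" depends entirely on what claim you want to hang on it.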

There you have it, with Evidence done, there’s your THAMES overview.

PRACTICE TIME

THAMES ended up covering a handful of weeks, with us drilling into various topics at various depths. I wanted it to simultaneously give them a guide for what to look for in papers AND give them a how-the-sausage-gets-made journey through science.

Don't let the name fool you, Jimmy. It's not really a floor. It's more of a steel grating that allows material to sluice through so it can be collected and exported!

To tie the whole room together at the end, I decided to do a collaborative THAMES of my most prominently shitty publication of all time, topic of this here blog post.

Students had a lot of fun seeing the issues in this one. They were, to be concise, a bit flabbergasted that this paper was published in a flash outlet. To wrap up, we had some PollEverywhere upvote/downvote on issues in that paper. Here were some winning options:

Cute Assumptions (seeing statue ➡️ System 2 thinking?)

Weak Results

Small Sample

Non-validated manipulations

Finally, I asked students to guess whether the first experiment would replicate. Across 2 lecture groups, 70-80% of students indicated that the effect would NOT replicate. Then I gave them the big reveal about how the finding doesn’t replicate. First, this paper showed that. Some years later, this one did the same. I got to talk about my silly stupid study on NPR, and the students had a grand time laughing at poor naïve grad school me and my silly little paper.

The practical take home that I left the students with:

ARMED WITH THAMES, about ¾ of them did what the 2011 Science editorial board couldn’t: spot cute, flashy, junk science. And call BULLSHIT.

Not only did they have the skills to spot questionable science, hopefully they now had the confidence to do so when reading papers in other modules!

Because THAMES is the good shit. And now you know about it too.

On Reflection…

On a first pass through, THAMES really was incredibly useful! One of the more useful creations of my teaching career. It helped me organise my module. MUCH more importantly, it helped my students learn to effectively read scientific papers, while also learning how (and why!) to think critically about the papers they read.

Looking back, I think my favorite letter that we kept looping back to was the ASSUMPTIONS that separate THAMES from THEMS. Really, it’s assumptions all the way down. Assumptions that link the theory to the hypothesis. Assumptions that link the measurement tools to the numbers they generate. Assumptions that the manipulation does what it’s billed as doing, and only that. Assumptions that the sample is appropriate. And these assumptions are the easiest things to hide, often especially from ourselves! So they’re the most important thing to think critically about! Assumptions, done well, are transparent and justified with evidence; done wrong, they are hidden, unjustified, and fatal to serious inquiry. It seemed empowering to the students that they could spot assumptions in my bad Science paper, and see their concerns vindicated by replication results. So I really hope that long after they’ve forgotten the difference between convergent and divergent validity, they remember that it’s assumptions all the way down, with posterior potential we mightn’t like for U and ME.

On the student side, it seemed very well received! They appreciated having some tangible targets to look for when approaching scientific papers. We spent some time in lecture practicing THAMES on some different examples. Then, as part of their final assessment (an upcoming blog post, believe it or not) students all did a THAMES of a highly cited Psych Science paper and predicted whether the main effect would successfully replicate….before we ran that very replication!

As the semester went on, I started hearing from some colleagues in the department that THAMES was coming up in office hours and other modules. We’ve since shared it with the whole department, with others already helping to hone it for future use. For a first pass at a new thing in a new curriculum, this one is firmly in the WIN column so far, with promising steps already afoot for collaborative improvement.

We’re in the process of scaling it up a bit, and some of my fellow instructors here have been incorporating it into their own teaching in other modules. Hopefully y’all can find it useful too!

This one ended up being a little longer. And really, the most important part of it was right at the beginning, in this here screen shot:

THAMES helps students focus on what’s important in a paper. THAMES helped me organize my teaching. I like THAMES, hopefully you now like THAMES. Thanks for reading!

Oh bee tee dubs, here’s a worksheet that we’ve been using to teach THAMES.

PS…

THAMES, like the river right? Ugh. I kind of hate that the mnemonic acronym to help science ended up being so regionally applicable and CUTE. I didn’t want it that way!

But here’s the catch: I NEEDED THOSE FUCKING LETTERS. T and H and A fit together. M and S just make sense. Yeah, E for Evidence and Ethics, that tracks.

So my options were THAMES (boo! Regionally cutesy!), or one of these fine alternatives:

SH…MEAT (memorable for the sneaky carnivore)

AS METH

ME SHAT

So…THAMES it was

PS2…

Given the obviousness of some letters needed for the mnemonic, and the paucity of good acronyms, I’d be SHOCKED and ASTONISHED if someone else hasn’t already put a THAMES thing out there. If you’ve THAMES-scooped me, as is entirely possible/likely, that is rad as hell! I hope THAMES works well for you, and I’d love to hear more about your experiences. Not trying to claim credit on this one, as it seems like a pretty sensible way to organize these thoughts…if you’re a pre-me THAMES originator, drop me a line!

Welcome to the THAMES Team, it’s THAMES Time